AI Code Review Workflow with GitHub, Cursor, and Claude

Published on 2/18/2026

Last reviewed on 2/20/2026

By The Stash Editorial Team

A production-ready AI code review workflow for 2026 using GitHub, Cursor, and Claude with prompt standards, governance controls, and measurable quality KPIs.

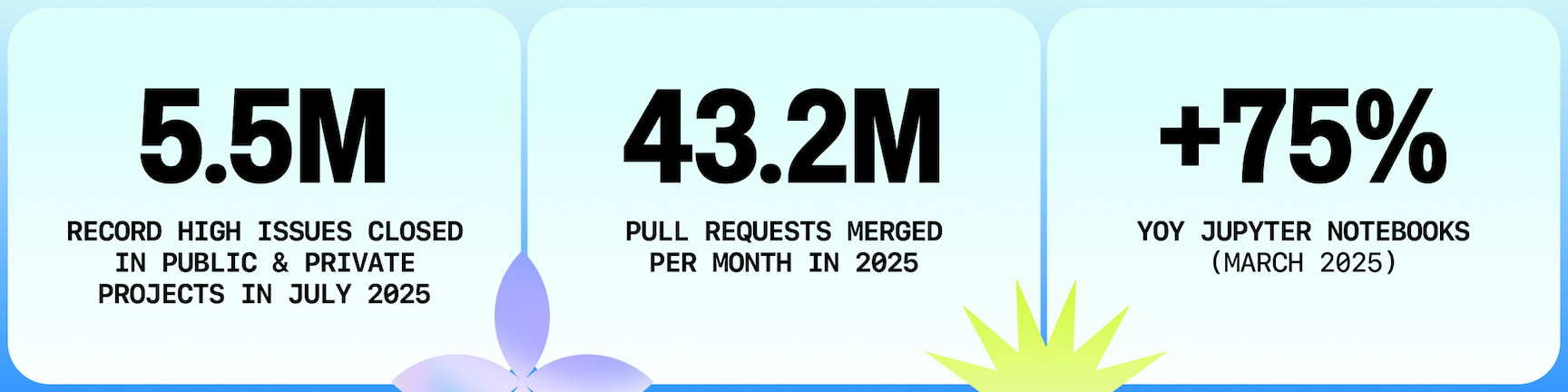

Research snapshot: ~4 min read, 9 major sections, 3 visuals (2 infographics), 5 cited references.

AI-assisted review can reduce cycle time, but only when the process is structured. Unstructured AI comments often add noise, duplicate obvious lint checks, and distract reviewers from risk-critical changes.

The goal is not "more comments." The goal is faster, higher-signal review quality.

This guide outlines a practical workflow for combining GitHub pull request controls with Cursor and Claude analysis so teams improve speed without weakening standards.

Where AI adds the most review value

AI performs best on repeatable review tasks:

- Highlighting missing test coverage on changed modules.

- Surfacing likely edge cases from diff patterns.

- Detecting risky dependency or config changes.

- Summarizing long diffs for reviewer orientation.

AI performs worst on domain-specific business logic judgment without context. Keep final risk decisions with human reviewers who understand system constraints and customer impact.

Standardize a pre-review prompt pack

Use a fixed prompt structure before human review begins. A strong baseline prompt asks AI to report:

- Behavior changes introduced by the diff.

- Potential regression risks and blast radius.

- Security and data-handling concerns.

- Missing tests with explicit suggestions.

- Rollback considerations.

Store this as a team template. Prompt consistency improves output consistency and makes AI signal easier to evaluate over time.
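As an illustration of what a stored team template could look like, here is a minimal Python sketch that renders the five report items above into one fixed prompt. The names `PROMPT_VERSION`, `REPORT_SECTIONS`, and `build_review_prompt` are hypothetical, and the wording of the instructions is an assumption, not a recommended prompt for any specific model.

```python
# Hypothetical version label for the template; bump it on every wording change.
PROMPT_VERSION = "pre-review-v1"

# The five report items from the baseline prompt described above.
REPORT_SECTIONS = (
    "Behavior changes introduced by the diff",
    "Potential regression risks and blast radius",
    "Security and data-handling concerns",
    "Missing tests, with explicit suggestions",
    "Rollback considerations",
)


def build_review_prompt(pr_title: str, diff_text: str) -> str:
    """Render the fixed pre-review prompt for one pull request."""
    numbered = "\n".join(
        f"{i}. {section}." for i, section in enumerate(REPORT_SECTIONS, start=1)
    )
    return (
        f"[prompt version: {PROMPT_VERSION}]\n"
        f"You are reviewing the pull request: {pr_title}\n\n"
        "Report on each of the following, in this order. "
        "Write 'none found' for a section rather than omitting it.\n"
        f"{numbered}\n\n"
        "Diff:\n"
        f"{diff_text}\n"
    )
```

Because every section is always requested in the same order, two reviews produced from the same template version can be compared line by line.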

GitHub workflow design for AI + human review

Use AI before assigning human reviewers:

1. Open PR with structured summary.

2. Run CI and automated quality checks.

3. Run AI review template and capture findings.

4. Author resolves or annotates findings.

5. Human reviewer focuses on high-risk decisions.

Keep branch protections, required checks, and approval rules active. AI is an accelerator layer, not a governance substitute.
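The ordering above can be sketched as a simple readiness check that reports the first unfinished step before a human reviewer is assigned. This is a sketch under stated assumptions: `PullRequestState`, `FindingStatus`, and `blocking_step` are hypothetical names, and the fields would have to be filled in from your own CI and review tooling.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class FindingStatus(Enum):
    OPEN = "open"
    RESOLVED = "resolved"
    ANNOTATED = "annotated"  # author explained why no change is needed


@dataclass
class PullRequestState:
    # Hypothetical fields; populate them from your own tooling.
    has_structured_summary: bool
    ci_passed: bool
    ai_review_ran: bool
    finding_statuses: List[FindingStatus] = field(default_factory=list)


def blocking_step(pr: PullRequestState) -> Optional[str]:
    """Return the first unfinished step, or None when step 5 can begin."""
    if not pr.has_structured_summary:
        return "1. Open PR with structured summary"
    if not pr.ci_passed:
        return "2. Run CI and automated quality checks"
    if not pr.ai_review_ran:
        return "3. Run AI review template and capture findings"
    if any(status is FindingStatus.OPEN for status in pr.finding_statuses):
        return "4. Author resolves or annotates findings"
    return None
```

A `None` result only means the PR is ready for human review. It does not replace branch protections or approval rules.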

Cursor and Claude role split

Teams get better results when tools have clear roles:

- Cursor: in-editor analysis and refactor drafting.

- Claude: deeper reasoning pass on architecture-level risk and test strategy.

- GitHub: source of truth for decision trail and merge controls.

Avoid tool-role overlap where everyone runs everything. That creates duplicated noise and weak accountability.

Security and reliability guardrails

Define hard boundaries up front:

- No auto-merge solely from AI approval.

- High-risk files require explicit senior reviewer sign-off.

- Security-sensitive changes require dedicated threat review.

- AI-suggested fixes must pass full CI before merge.

Use secure code review guidance from OWASP, and align risk acceptance with your service reliability targets.
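The four boundaries above could be expressed as a merge gate that lists every unmet condition. The following is a minimal sketch, not a GitHub API integration: the path patterns, the `MergeRequest` fields, and `merge_blockers` are all hypothetical and would need to be mapped to your own risk taxonomy and review data.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import List

# Hypothetical path patterns; replace with your own risk taxonomy.
HIGH_RISK_PATTERNS = ["infra/*", "migrations/*", "*.tf"]
SECURITY_PATTERNS = ["auth/*", "crypto/*", "*secrets*"]


@dataclass
class MergeRequest:
    changed_files: List[str]
    ci_passed: bool
    human_approvals: int
    senior_signoff: bool
    threat_review_done: bool
    ai_approved: bool = False  # recorded, but never sufficient on its own


def _matches(paths: List[str], patterns: List[str]) -> bool:
    return any(fnmatchcase(path, pat) for path in paths for pat in patterns)


def merge_blockers(mr: MergeRequest) -> List[str]:
    """Return the reasons a merge must wait; an empty list means no blockers."""
    blockers = []
    # AI approval is deliberately ignored here: only human approvals count.
    if mr.human_approvals < 1:
        blockers.append("needs at least one human approval")
    if not mr.ci_passed:
        blockers.append("full CI must pass")
    if _matches(mr.changed_files, HIGH_RISK_PATTERNS) and not mr.senior_signoff:
        blockers.append("high-risk files need senior reviewer sign-off")
    if _matches(mr.changed_files, SECURITY_PATTERNS) and not mr.threat_review_done:
        blockers.append("security-sensitive change needs threat review")
    return blockers
```

Returning all blockers at once, rather than failing on the first, lets the author see everything that still needs attention in a single pass.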

KPI set for weekly operational review

Track workflow performance with a small KPI set:

- PR open-to-merge time.

- Re-opened PR percentage.

- Post-merge rollback frequency.

- Defect escape rate by severity.

- Reviewer time spent per merged PR.

If PR speed improves while rollback and defect metrics worsen, the workflow is over-optimized for throughput. Rebalance prompts and approval gates.
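The rebalancing rule above can be checked mechanically during the weekly review. Here is a minimal sketch that compares two weeks of KPIs. `WeeklyKpis` and `throughput_over_optimized` are hypothetical names, and the sketch simplifies defect escapes to a single count rather than a breakdown by severity.

```python
from dataclasses import dataclass


@dataclass
class WeeklyKpis:
    median_open_to_merge_hours: float
    reopened_pr_pct: float
    rollbacks: int
    escaped_defects: int  # simplified: not split by severity in this sketch
    reviewer_minutes_per_pr: float


def throughput_over_optimized(prev: WeeklyKpis, curr: WeeklyKpis) -> bool:
    """True when merge time improved but rollbacks or escaped defects got worse."""
    faster = curr.median_open_to_merge_hours < prev.median_open_to_merge_hours
    quality_worse = (
        curr.rollbacks > prev.rollbacks
        or curr.escaped_defects > prev.escaped_defects
    )
    return faster and quality_worse
```

A single noisy week can trigger this flag, so treat it as a prompt for discussion rather than an automatic policy change.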

Enablement plan for reviewers and authors

Workflow quality depends on reviewer enablement, not just tool configuration.

Train authors and reviewers on the same operating method so AI findings are interpreted consistently. A lightweight enablement plan should include onboarding, calibration sessions, and monthly retrospective examples that compare strong versus weak review outcomes.

Use a repeatable enablement cadence:

- Week 1 onboarding on prompt pack and risk taxonomy.

- Week 2 shadow reviews with senior reviewer feedback.

- Week 3 independent reviews scored against rubric.

- Monthly calibration session on false positives and missed risks.

- Quarterly refresh when prompt templates or architecture change.

Anchor this with external baselines such as NIST SSDF and performance measures from DORA metrics. Teams improve fastest when training and metrics are linked.

Prompt governance and drift control

Prompt libraries drift quickly when each team edits templates ad hoc. Treat prompts as versioned operational assets:

- Store prompts in version control with code owner review.

- Require change rationale and expected KPI impact per update.

- Run regression prompts before merging prompt changes.

- Track model version + prompt version together in review logs.

- Roll back prompt sets automatically if error budgets are exceeded.

This governance model keeps AI suggestions stable across repositories and reduces unexplained quality variation between teams. For adjacent implementation surfaces, align this process with LLM observability stack planning and AI coding assistants so monitoring, tooling, and review policy evolve together.
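Two of the controls above, logging model and prompt versions together and rolling back when an error budget is exceeded, could look like the sketch below. It rests on explicit assumptions: the "error" counted against the budget is taken to be a reviewer-flagged false positive, the 25% default budget is arbitrary, and `ReviewLogEntry`, `error_rate`, and `active_prompt_version` are hypothetical names.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class ReviewLogEntry:
    pr_id: str
    model_version: str   # logged together with the prompt version
    prompt_version: str
    false_positive: bool  # assumption: a reviewer marked the AI finding as noise


def error_rate(entries: List[ReviewLogEntry], prompt_version: str) -> float:
    """Share of logged findings for one prompt version that were false positives."""
    relevant = [e for e in entries if e.prompt_version == prompt_version]
    if not relevant:
        return 0.0
    return sum(e.false_positive for e in relevant) / len(relevant)


def active_prompt_version(
    entries: List[ReviewLogEntry],
    current: str,
    previous: str,
    error_budget: float = 0.25,  # hypothetical budget; tune per team
) -> str:
    """Fall back to `previous` when `current` exceeds the error budget."""
    if error_rate(entries, current) > error_budget:
        return previous
    return current
```

Keeping `model_version` on every entry makes it possible to tell whether a quality shift followed a prompt edit or a model upgrade.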

Finally, treat review workflow changes like product experiments: define baseline metrics, run limited rollouts, and compare results against a control period. Without controlled iteration, teams confuse novelty effects with true quality improvements.

Stable experimentation discipline is what turns AI review from ad hoc assistance into a dependable engineering capability.

Keep experiment scopes narrow: one team, one repository segment, one review template revision per cycle. Small controlled changes make causal impact easier to detect and reduce the risk of organization-wide quality regression from a single prompt update.

Capture each experiment outcome in a short weekly memo so prompt decisions remain auditable.
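For the weekly memo, a comparison against the control period can be as simple as the relative change in a metric's median. The sketch below is illustrative only: `MIN_SAMPLES` is an arbitrary hypothetical threshold, `relative_change` is a hypothetical helper, and a median comparison is a rough signal, not a statistical significance test.

```python
from statistics import median
from typing import Optional, Sequence

# Hypothetical minimum number of PRs per period before reporting a result.
MIN_SAMPLES = 20


def relative_change(
    control: Sequence[float], rollout: Sequence[float]
) -> Optional[float]:
    """Relative change in the median of a metric (for example open-to-merge hours).

    Returns None when either period has too few samples or the control
    median is zero, so the memo can record "inconclusive" instead of a number.
    """
    if len(control) < MIN_SAMPLES or len(rollout) < MIN_SAMPLES:
        return None
    base = median(control)
    if base == 0:
        return None
    return (median(rollout) - base) / base
```

A negative value means the metric dropped during the rollout. Whether that is good depends on the metric: lower merge time is an improvement, while a lower number of reviewed PRs may not be.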

Final recommendation

The best AI code review systems are predictable systems: clear prompts, explicit tool roles, stable GitHub controls, and weekly KPI governance.

Teams that institutionalize this discipline usually gain both review speed and review quality.

For related decision pages, compare Cursor vs GitHub Copilot, review AI pair programming tools, and explore best issue tracking tools for developers.

