Best Coding Resources for Teams (2026 Decision Guide)

Published on 3/2/2026

Last reviewed on 3/2/2026

By The Stash Editorial Team

A shortlist of coding resources using fact/inference/recommendation framing, with explicit tradeoffs and source-backed implementation guidance for 2026.

Research snapshot: ~11 min read · 18 major sections · 6 visuals (3 infographics) · 12 cited references

Quick answer (2026-03-02): which coding resources should teams shortlist now?

Shortlist tools that show clear implementation signals, a predictable maintenance burden, and explicit integration paths; these tend to be the safest picks. This guide is decision-first: it is written for teams actively evaluating tools, not casually browsing.

Quick verdict by scenario

Fact (2026-03-02): No single coding resource wins every workflow. Teams generally do better with a primary tool per workflow plus one fallback path.

- Recommendation: Choose Get YouTube Thumbnails w/ Airtable Script · GitHub first for developers looking for practical code references and for teams aligning on frontend best practices.

- Recommendation: Choose copaw first for developers looking for practical code references and for teams aligning on frontend best practices.

- Recommendation: Choose Round Number to Nearest Integer · GitHub first for developers looking for practical code references and for teams aligning on frontend best practices.

- Recommendation: Choose Flowclass first for developers looking for practical code references and for teams aligning on frontend best practices.

- Recommendation: Choose pixel-agents first for developers looking for practical code references and for teams aligning on frontend best practices.

Inference: Current metadata signals point all five options at the same scenario, so they do not separate them; rank them with the evaluation criteria and pilot results instead.

Inference: A primary-plus-fallback operating model usually reduces continuity risk when pricing, policy, or reliability conditions change.
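A minimal sketch of the primary-plus-fallback model in Python, assuming each tool can be wrapped in a function that takes a task and returns a result. `ToolRoute` and `run_with_fallback` are hypothetical names, not any listed tool's API, and catching every exception is a deliberate simplification for the sketch.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class ToolRoute:
    """Hypothetical wrapper: a tool name plus a callable that runs one task with it."""
    name: str
    run: Callable[[str], str]


def run_with_fallback(task: str, primary: ToolRoute, fallback: ToolRoute) -> Tuple[str, str]:
    """Run the task on the primary tool; if it raises, rerun it on the fallback.

    Returns (tool_used, result) so a switch to the fallback shows up in logs.
    """
    try:
        return primary.name, primary.run(task)
    except Exception:
        # Continuity path: outage, quota, or policy block on the primary tool.
        return fallback.name, fallback.run(task)


if __name__ == "__main__":
    def unavailable(task: str) -> str:
        raise TimeoutError("primary tool unavailable")

    used, result = run_with_fallback(
        "lint snippet",
        ToolRoute("primary", unavailable),
        ToolRoute("fallback", lambda task: f"handled: {task}"),
    )
    print(used, result)  # fallback handled: lint snippet
```

Returning the name of the tool that actually ran keeps fallback usage visible, which matters when you later review how often continuity was needed.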

Internal paths: /category/coding | /latest | /collections | /compare | /alternatives

Related guides: /collections | /compare

Authority brief and decision context

Fact (2026-03-02): This guide targets teams at the decision stage of evaluating coding resources, under near-term implementation pressure.

Reader job-to-be-done: choose a tool that improves delivery speed without adding unbounded operational complexity.

Primary failure risk: selecting a tool on feature demos alone and discovering integration friction after rollout.

Topic coverage map for coding resources

Inference: Decision-stage content is most useful when it spans architecture, adoption, governance, economics, and execution risk rather than only feature snapshots.

- Integration risk and rollout sequencing

- Governance and ownership model

- Cost visibility and procurement controls

- Migration and rollback planning

- Operational reliability and incident handling

- Training and adoption design

- Measurement model and KPI alignment

- Long-term maintainability

Market evidence and visuals (2026-03-02)

Fact (2026-03-02): The visuals below are sourced from first-party benchmark reports to anchor this coding resources evaluation in external evidence, not opinion alone.

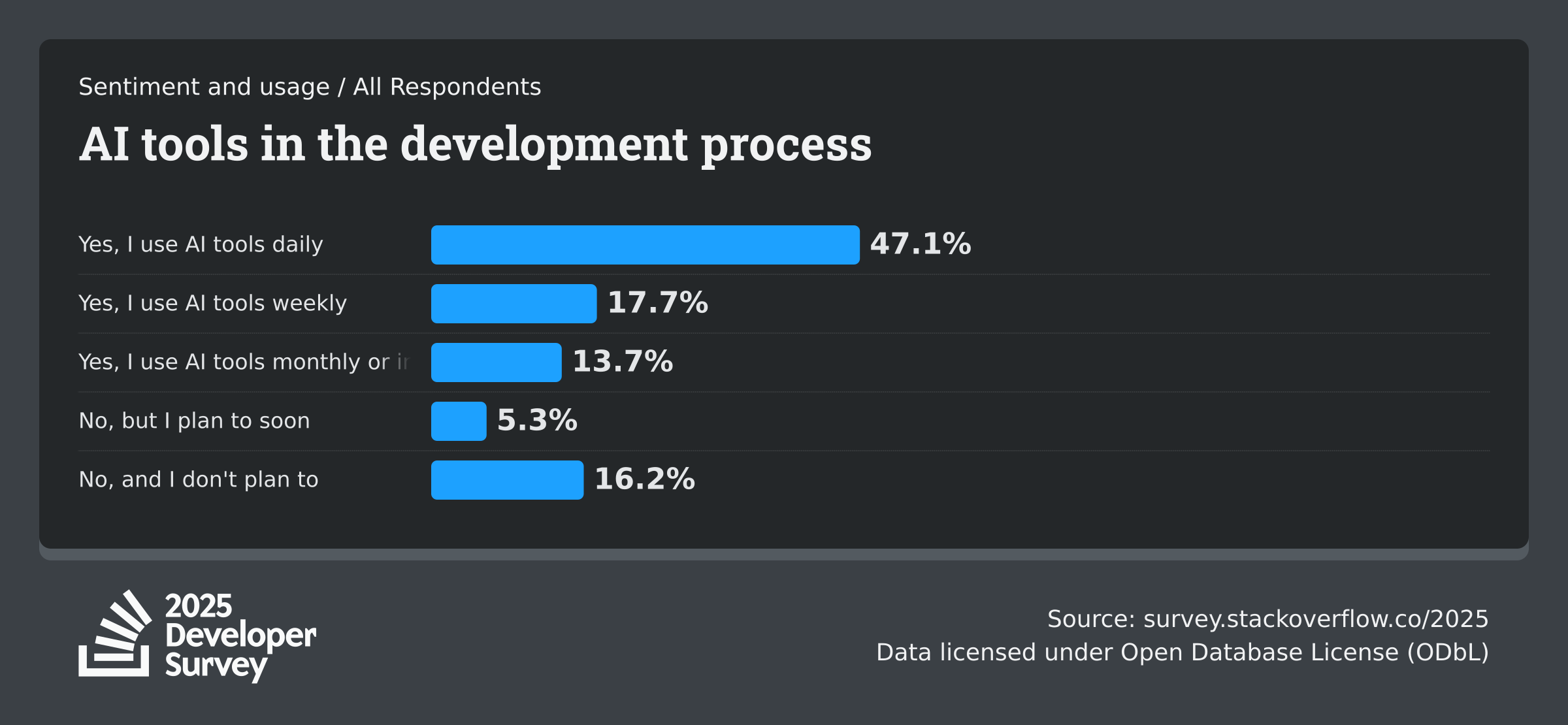

Stack Overflow - Developer Survey 2025 (AI)

Fact (2025-07-29): Annual developer sentiment dataset covering AI adoption, trust, and workflow impact.

GitHub - Octoverse 2025

Fact (2025-11-06): State-of-development report tracking developer growth and AI project adoption.

Google Cloud / DORA - DORA Report 2025

Fact (2025-01-01): Software delivery research on AI usage, platform engineering maturity, and delivery performance.

Evaluation criteria used in this guide

- Implementation effort and migration risk

- Integration depth across existing stack

- Time-to-value for first production workflow

- Governance controls and auditability

- Long-term maintenance overhead and roadmap clarity

- Commercial risk (pricing volatility and lock-in)

- Evidence quality and source freshness for every critical claim

- Operational readiness: ownership, onboarding, and incident response expectations

- Security/compliance mapping completeness before scaled rollout

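One way to make these criteria comparable across candidates is a weighted score. The sketch below is an assumption, not this guide's scoring method: the criterion keys, the 1-5 scale (higher is better, so low effort or low risk scores high), and the weights are all placeholders to replace with your own.

```python
from typing import Dict, List, Tuple

# Placeholder weights (they sum to 1.0); the guide does not prescribe numbers.
WEIGHTS: Dict[str, float] = {
    "implementation_effort": 0.15,
    "integration_depth": 0.15,
    "time_to_value": 0.15,
    "governance": 0.15,
    "maintenance": 0.10,
    "commercial_risk": 0.10,
    "evidence_quality": 0.10,
    "operational_readiness": 0.05,
    "security_mapping": 0.05,
}


def weighted_score(scores: Dict[str, float], weights: Dict[str, float] = WEIGHTS) -> float:
    """Combine 1-5 criterion scores into one number; refuse partially scored candidates."""
    missing = sorted(set(weights) - set(scores))
    if missing:
        raise ValueError(f"unscored criteria: {missing}")
    return sum(weight * scores[name] for name, weight in weights.items())


def rank(candidates: Dict[str, Dict[str, float]]) -> List[Tuple[str, float]]:
    """Return (candidate, score) pairs, best first."""
    scored = [(name, weighted_score(scores)) for name, scores in candidates.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```

Refusing partially scored candidates mirrors the evidence-quality criterion: a missing score is treated as a gap to close, not as a zero.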

Coding Resources candidates and tradeoff analysis

1. Get YouTube Thumbnails w/ Airtable Script · GitHub

Fact (2026-03-02): Get YouTube Thumbnails w/ Airtable Script · GitHub is a GitHub Gist; its captured metadata offers no description beyond the title "Get YouTube Thumbnails w/ Airtable Script."

Inference: Based on current metadata signals, Get YouTube Thumbnails w/ Airtable Script · GitHub is likely to fit best for developers looking for practical code references and for teams aligning on frontend best practices.

Recommendation: Pilot Get YouTube Thumbnails w/ Airtable Script · GitHub in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Transparent implementation details with community-level auditability

- Strength: Potential to reduce repetitive tasks if guardrails are defined early

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Teams that cannot support process changes during the evaluation window.

Source URL: https://gist.github.com/nocodesupplyco/37d3730ab5b3ec60e153f6d256c35058

2. copaw

Fact (2026-03-02): copaw (agentscope-ai/CoPaw) positions itself as a personal AI assistant that is easy to install, can be deployed on your own machine or in the cloud, and supports multiple chat apps with easily extensible capabilities.

Inference: Based on current metadata signals, copaw is likely to fit best for developers looking for practical code references and for teams aligning on frontend best practices.

Recommendation: Pilot copaw in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Potential to reduce repetitive tasks if guardrails are defined early

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Teams that cannot support process changes during the evaluation window.

Source URL: https://github.com/agentscope-ai/copaw

3. Round Number to Nearest Integer · GitHub

Fact (2026-03-02): Round Number to Nearest Integer · GitHub is a GitHub Gist; its captured metadata offers no description beyond the title "Round Number to Nearest Integer."

Inference: Based on current metadata signals, Round Number to Nearest Integer · GitHub is likely to fit best for developers looking for practical code references and for teams aligning on frontend best practices.

Recommendation: Pilot Round Number to Nearest Integer · GitHub in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Fits developer-first execution paths without heavy UI overhead

- Strength: Transparent implementation details with community-level auditability

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Teams that cannot support process changes during the evaluation window.

Source URL: https://gist.github.com/nocodesupplyco/a7aed0f6efecf01bd049324e92e2c8b7

4. Flowclass

Fact (2026-03-02): Flowclass (classes-for.webflow.io) offers no self-description beyond its name in the captured metadata.

Inference: Based on current metadata signals, Flowclass is likely to fit best for developers looking for practical code references and for teams aligning on frontend best practices.

Recommendation: Pilot Flowclass in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Fits developer-first execution paths without heavy UI overhead

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Teams that cannot support process changes during the evaluation window.

Source URL: https://classes-for.webflow.io

5. pixel-agents

Fact (2026-03-02): pixel-agents (pablodelucca/pixel-agents) describes itself only as "Pixel office."

Inference: Based on current metadata signals, pixel-agents is likely to fit best for developers looking for practical code references and for teams aligning on frontend best practices.

Recommendation: Pilot pixel-agents in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Transparent implementation details with community-level auditability

- Strength: Potential to reduce repetitive tasks if guardrails are defined early

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Teams that cannot support process changes during the evaluation window.

Source URL: https://github.com/pablodelucca/pixel-agents

6. kaku

Fact (2026-03-02): kaku (tw93/Kaku) positions itself as a fast, out-of-the-box terminal built for AI coding.

Inference: Based on current metadata signals, kaku is likely to fit best for developers looking for practical code references and for teams aligning on frontend best practices.

Recommendation: Pilot kaku in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Fits developer-first execution paths without heavy UI overhead

- Strength: Potential to reduce repetitive tasks if guardrails are defined early

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Teams that cannot support process changes during the evaluation window.

Source URL: https://github.com/tw93/kaku

7. Knockout - Figma to Webflow CSS Framework

Fact (2026-03-02): Knockout - Figma to Webflow CSS Framework (madewithknockout.webflow.io) offers no self-description beyond its name, which identifies it as a CSS framework for moving designs from Figma to Webflow.

Inference: Based on current metadata signals, Knockout - Figma to Webflow CSS Framework is likely to fit best for developers looking for practical code references and for teams aligning on frontend best practices.

Recommendation: Pilot Knockout - Figma to Webflow CSS Framework in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Fits developer-first execution paths without heavy UI overhead

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Teams that cannot support process changes during the evaluation window.

Source URL: https://madewithknockout.webflow.io

8. chrome-devtools-mcp

Fact (2026-03-02): chrome-devtools-mcp (ChromeDevTools/chrome-devtools-mcp) positions itself as Chrome DevTools for coding agents.

Inference: Based on current metadata signals, chrome-devtools-mcp is likely to fit best for developers looking for practical code references and for teams aligning on frontend best practices.

Recommendation: Pilot chrome-devtools-mcp in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Fits developer-first execution paths without heavy UI overhead

- Strength: Transparent implementation details with community-level auditability

- Strength: Potential to reduce repetitive tasks if guardrails are defined early

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Teams that cannot support process changes during the evaluation window.

Source URL: https://github.com/chromedevtools/chrome-devtools-mcp

Integration and deployment reality checks

Inference: Most rollout failures occur at the integration layer (ownership gaps, weak fallback behavior, and missing review controls), not at the prompt layer.

- Recommendation: Define task-level prompt contracts for production-impacting workflows before enabling broad usage.

- Recommendation: Require human approval gates for changes that can affect production reliability, security, or billing.

- Recommendation: Log model/provider metadata for accepted outputs so review decisions are auditable.

- Recommendation: Maintain one fallback path and test failover behavior before full-team rollout.
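The approval-gate and logging recommendations can be combined in one acceptance step. This is a minimal sketch under stated assumptions: the `GATED_AREAS` tags, the `AcceptedOutput` record, and its field names are hypothetical, and the model and provider strings stand in for whatever identifiers your provider actually reports.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional, Set

# Hypothetical risk tags for changes that need a human approver.
GATED_AREAS = {"production_reliability", "security", "billing"}


@dataclass
class AcceptedOutput:
    task_id: str
    workflow: str
    model: str          # model identifier as reported by the provider
    provider: str
    risk_areas: Set[str]
    approved_by: Optional[str] = None
    accepted_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def accept(output: AcceptedOutput) -> str:
    """Return one JSON audit line; refuse gated changes that have no approver."""
    gated = sorted(output.risk_areas & GATED_AREAS)
    if gated and not output.approved_by:
        raise PermissionError(f"{output.task_id}: human approval required for {gated}")
    record = asdict(output)
    record["risk_areas"] = sorted(output.risk_areas)  # sets are not JSON-serializable
    return json.dumps(record, sort_keys=True)
```

Appending each returned line to an append-only log gives reviewers the model and provider behind every accepted output, plus who approved it when approval was required.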

Role-based recommendation paths

Engineering leaders

Inference: Engineering leaders typically optimize for reliability, maintainability, and time-to-value under delivery pressure.

Recommendation: For coding resources, run scoped pilots with explicit rollback criteria and weekly instrumentation reviews before org-wide rollout.

Product and ops owners

Inference: Product and operations owners benefit most when tools reduce coordination overhead and shorten feedback loops between teams.

Recommendation: Require a clear owner, onboarding plan, and adoption rubric before approving expanded spend.

Security and governance stakeholders

Inference: Security teams generally need evidence of policy controls, access boundaries, and data handling paths before sign-off.

Recommendation: Complete a policy mapping checklist and document unresolved gaps prior to production rollout.
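A policy mapping checklist can start as a plain mapping from each required control to its documented evidence. In this sketch the three control names come from the per-candidate governance checks (access controls, data retention, audit expectations); the function names and the rule that blank evidence counts as a gap are assumptions.

```python
from typing import Dict, List

# Controls taken from this guide's governance checks; extend with your own policy catalogue.
REQUIRED_CONTROLS: List[str] = ["access_control", "data_retention", "audit_logging"]


def policy_gaps(evidence: Dict[str, str]) -> List[str]:
    """Return required controls with missing or blank evidence, in checklist order."""
    return [c for c in REQUIRED_CONTROLS if not evidence.get(c, "").strip()]


def ready_for_signoff(evidence: Dict[str, str]) -> bool:
    """Sign-off is possible only when every required control is documented."""
    return not policy_gaps(evidence)
```

Writing the list returned by `policy_gaps` into the decision log is one way to keep unresolved gaps documented before production rollout.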

Execution plan and operating checklist

Days 1-30: baseline and pilot design

- Define baseline metrics (cycle time, defect escape rate, adoption rate, and support load).

- Run one bounded production pilot with clear success and rollback thresholds.

- Capture integration blockers, manual workarounds, and security questions in one backlog.
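The four baseline metrics can be computed from data most teams already have. A minimal sketch, assuming you can export per-change cycle times, defect counts split by where they were found, user counts, and support tickets. The defect escape rate uses one common definition (defects found in production divided by all defects found), and every function and field name is hypothetical.

```python
from dataclasses import dataclass
from statistics import median
from typing import Sequence


@dataclass
class Baseline:
    cycle_time_hours: float          # median time from start of work to merge (assumed definition)
    defect_escape_rate: float        # production defects / all defects found
    adoption_rate: float             # active users / eligible users
    support_tickets_per_week: float


def compute_baseline(
    cycle_times_hours: Sequence[float],
    production_defects: int,
    total_defects: int,
    active_users: int,
    eligible_users: int,
    support_tickets: int,
    weeks: float,
) -> Baseline:
    return Baseline(
        cycle_time_hours=median(cycle_times_hours),
        defect_escape_rate=production_defects / total_defects if total_defects else 0.0,
        adoption_rate=active_users / eligible_users if eligible_users else 0.0,
        support_tickets_per_week=support_tickets / weeks,
    )
```

Capture these values before the pilot starts; without them, the success and rollback thresholds have nothing to compare against.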

Days 31-60: controlled expansion

- Expand to a second workflow only after first-pilot KPIs show measurable improvement.

- Harden onboarding docs, usage guardrails, and incident playbooks from pilot learnings.

- Review commercial terms against projected usage to avoid surprise spend growth.
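The rule for expanding to a second workflow can be written down as an explicit gate. A sketch under assumptions: metric names match the days 1-30 baseline metrics, the required KPIs (adoption rate and defect escape rate) follow the per-candidate pilot recommendations, and the 10% minimum relative gain is a placeholder threshold, not a figure from this guide.

```python
from typing import Dict, Iterable

# For these metrics a decrease is an improvement.
LOWER_IS_BETTER = {"cycle_time_hours", "defect_escape_rate", "support_tickets_per_week"}


def relative_gain(metric: str, before: float, after: float) -> float:
    """Signed relative improvement; a zero baseline gives no usable ratio, so it counts as 0."""
    if before == 0:
        return 0.0
    change = (after - before) / before
    return -change if metric in LOWER_IS_BETTER else change


def should_expand(
    baseline: Dict[str, float],
    pilot: Dict[str, float],
    required: Iterable[str] = ("adoption_rate", "defect_escape_rate"),
    min_gain: float = 0.10,
) -> bool:
    """Expand only if each required KPI improves by min_gain and no tracked KPI regresses."""
    gains = {m: relative_gain(m, baseline[m], pilot[m]) for m in baseline}
    return all(gains[m] >= min_gain for m in required) and all(g >= 0 for g in gains.values())
```

If a required KPI starts at zero (adoption of a brand-new tool, for example), this gate can never pass, so compare that KPI against an absolute target instead.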

Days 61-90: governance and scale readiness

- Formalize ownership model, review cadence, and escalation paths for critical failures.

- Document migration path and fallback plan if pricing, roadmap, or reliability changes materially.

- Publish adoption scorecard and decision log for leadership visibility.

Cost model: optimize accepted outcomes, not raw prompt spend

Fact (2026-02-23): Low per-call pricing can still create higher total cost if acceptance rates are weak and review/rework overhead grows.

- Cost per accepted implementation change

- Cost per resolved debugging incident

- Prompt-to-merge cycle time

- Human rework time per accepted output

- Acceptance ratio by workflow domain
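Cost per accepted change and acceptance ratio can be computed together, and doing so shows why per-call price alone misleads. A sketch with hypothetical field names; the two tools and all figures in the usage example are invented for illustration, not measurements, and the loaded hourly rate for rework is an assumption you would supply.

```python
from dataclasses import dataclass


@dataclass
class WorkflowUsage:
    domain: str
    tool_spend: float        # tool/provider cost for the period
    outputs_generated: int
    outputs_accepted: int
    rework_hours: float      # human time spent fixing accepted outputs
    hourly_rate: float       # loaded engineering cost per hour (your assumption)

    @property
    def acceptance_ratio(self) -> float:
        return self.outputs_accepted / self.outputs_generated if self.outputs_generated else 0.0

    @property
    def cost_per_accepted(self) -> float:
        """Tool spend plus rework cost, divided by accepted outputs."""
        if self.outputs_accepted == 0:
            return float("inf")  # money spent, nothing accepted
        return (self.tool_spend + self.rework_hours * self.hourly_rate) / self.outputs_accepted


if __name__ == "__main__":
    # Invented figures: tool A is cheaper per call but rarely accepted.
    a = WorkflowUsage("frontend", tool_spend=100, outputs_generated=200,
                      outputs_accepted=20, rework_hours=10, hourly_rate=100)
    b = WorkflowUsage("frontend", tool_spend=400, outputs_generated=100,
                      outputs_accepted=60, rework_hours=3, hourly_rate=100)
    print(a.acceptance_ratio, a.cost_per_accepted)            # 0.1 55.0
    print(b.acceptance_ratio, round(b.cost_per_accepted, 2))  # 0.6 11.67
```

In this invented example tool B costs four times as much in spend yet lands at roughly a fifth of tool A's cost per accepted change, once rework time is counted.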

Source quality and citation policy

Fact (2026-03-02): This guide prioritizes first-party product documentation, official benchmark reports, and attributed visuals from high-authority domains.

- Every embedded visual includes alt text, source label, and source URL attribution.

- Time-sensitive statements use absolute dates and should be re-verified before publication.

- Unattributed social claims and low-authority aggregators are excluded from decision-critical sections.


Common mistakes to avoid

- Selecting one tool globally before workflow-level validation.

- Approving rollout without baseline metrics and explicit success/failure thresholds.

- Ignoring fallback strategy and continuity planning for provider shifts.

- Comparing token pricing only, without tracking acceptance quality and rework overhead.

- Running pilots without assigning clear owner accountability and governance controls.

Where recommendations can fail

- Failure mode: no baseline metrics before pilot, making improvement claims unverifiable.

- Failure mode: rollout to entire org before validating integration reliability in one workflow.

- Failure mode: procurement decision made without ownership for maintenance and onboarding.

- Failure mode: ignoring migration plan if pricing or roadmap changes materially.

Implementation sequence (30/60/90 days)

Recommendation: Days 1-30 should define baseline metrics and run one scoped pilot with weekly review checkpoints.

Recommendation: Days 31-60 should expand to a second workflow only if pilot metrics improve and rollback path remains viable.

Recommendation: Days 61-90 should formalize governance, training, and cost controls before wider rollout.

Final recommendation

Inference: Teams that treat tool selection as an operational decision, not a novelty decision, usually see better long-term outcomes.

Recommendation: Treat this shortlist as a dated snapshot (2026-03-02). Before acting on it, confirm that the sourced visuals and tradeoff notes are still current, and re-run validation after every major refresh.

Methodology and source freshness

Fact (2026-03-02): Sources in this guide are first-party links captured during the current research cycle.

Fact (2026-03-02): Time-sensitive claims, including benchmark visuals and cited metrics, were last reviewed on 2026-03-02 and should be re-verified before you rely on them.

FAQ

Is there one universal winner in coding resources?

No. Recommendation: assign primary tools by workflow domain, then keep one fallback option for continuity.

Should we standardize on one option for every team?

Inference: Standardizing too early can reduce adaptability. Most organizations perform better with a controlled primary-plus-fallback model.

How often should this comparison be refreshed?

Recommendation: Re-validate quarterly, and also after major product updates, pricing changes, or policy shifts.
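The refresh rule can be checked mechanically. A minimal sketch, assuming you record the last review date and the dates of known product, pricing, or policy changes; treating a quarter as 91 days and the function name are assumptions.

```python
from datetime import date, timedelta
from typing import Iterable

QUARTER = timedelta(days=91)  # approximation of one quarter


def needs_revalidation(last_reviewed: date, today: date, change_dates: Iterable[date]) -> bool:
    """True if a quarter has passed or any tracked change landed after the last review."""
    if today - last_reviewed >= QUARTER:
        return True
    return any(changed > last_reviewed for changed in change_dates)


if __name__ == "__main__":
    reviewed = date(2026, 3, 2)
    print(needs_revalidation(reviewed, date(2026, 4, 1), []))                   # False
    print(needs_revalidation(reviewed, date(2026, 4, 1), [date(2026, 3, 15)]))  # True
    print(needs_revalidation(reviewed, date(2026, 6, 2), []))                   # True
```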

What should we measure during pilot evaluation?

Recommendation: measure accepted output quality, rework time, cycle-time impact, and governance fit by workflow.


Sources & review

Reviewed on 3/2/2026

- Get YouTube Thumbnails w/ Airtable Script (GitHub Gist)

- copaw (GitHub repository)

- Round Number to Nearest Integer (GitHub Gist)

- Flowclass (official site)

- pixel-agents (GitHub repository)

- kaku (GitHub repository)

- Knockout - Figma to Webflow CSS Framework (official site)

- chrome-devtools-mcp (GitHub repository)

- woxi (official site)

- Redirect Form on Submit and Pass Input Value to URL (GitHub Gist)

- Stack Overflow: Developer Survey 2025 (AI)

- GitHub: Octoverse 2025