Best Design Tools for Teams (2026 Decision Guide)

Published on 3/2/2026

Last reviewed on 3/2/2026

By The Stash Editorial Team

Design Tools shortlist with fact/inference/recommendation framing, explicit tradeoffs, and source-backed implementation guidance for 2026.

Research snapshot: ~11 min read | 18 major sections | 6 visuals (3 infographics) | 12 cited references

Quick answer (2026-03-02): which design tool options should teams shortlist now?

Shortlist tools that show clear implementation signals, predictable maintenance burden, and explicit integration paths. Design stack choices compound quickly because they shape handoffs, feedback loops, and implementation speed. This guide is decision-first and optimized for high-intent evaluation workflows.

Quick verdict by scenario

Fact (2026-03-02): No single design tool consistently wins every workflow. Teams generally perform better with workflow-specific primary tools and one fallback path.

- Recommendation: Choose Framer UI Kit and Design System — Kompa first when product designers building interface systems in Framer want a ready-made UI kit and design system.

- Recommendation: Choose FramerAuth — Create Member-Only Content in Framer first when a Framer site needs authentication, gated content, or paywalls.

- Recommendation: Choose Geist Font first when designers and developers need a shared interface typeface.

- Recommendation: Choose Markdrop – Visual Feedback Tool for Web Agencies & Designers first when agencies or design teams need to collect client feedback on websites, images, and videos in one place.

- Recommendation: Choose Pattern Playground first when product designers are building interface systems or cross-functional teams are collaborating on UI.

Inference: A primary-plus-fallback operating model usually reduces continuity risk when pricing, policy, or reliability conditions change.
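The primary-plus-fallback model can be sketched as a small lookup table keyed by workflow. This is a minimal illustration under stated assumptions, not a prescribed setup: the workflow labels (`client-review`, `member-gating`), the fallback names, and the `pick_tool` helper are all hypothetical; only Markdrop and FramerAuth come from the shortlist.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolAssignment:
    """Primary and fallback tool for one workflow domain (hypothetical structure)."""
    primary: str
    fallback: str


# Hypothetical workflow-to-tool table. Markdrop and FramerAuth come from the
# shortlist; the workflow labels and fallback names are placeholders.
ASSIGNMENTS: dict[str, ToolAssignment] = {
    "client-review": ToolAssignment(primary="Markdrop", fallback="manual-review-doc"),
    "member-gating": ToolAssignment(primary="FramerAuth", fallback="static-members-page"),
}


def pick_tool(workflow: str, unavailable: set[str]) -> str:
    """Return the workflow's primary tool, or its fallback if the primary is unavailable."""
    assignment = ASSIGNMENTS[workflow]
    if assignment.primary not in unavailable:
        return assignment.primary
    if assignment.fallback not in unavailable:
        return assignment.fallback
    # Both paths are down: surface it instead of silently picking something else.
    raise RuntimeError(f"no available tool for workflow {workflow!r}")


if __name__ == "__main__":
    print(pick_tool("client-review", set()))         # Markdrop
    print(pick_tool("client-review", {"Markdrop"}))  # manual-review-doc
```

Keeping the pairing explicit per workflow means a pricing, policy, or reliability change to one primary tool triggers a documented switch for that workflow only, rather than an org-wide scramble.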

Internal paths: /category/design-tools | /latest | /collections | /compare | /alternatives

Authority brief and decision context

Fact (2026-03-02): Search intent is decision-stage evaluation for design tools with near-term implementation pressure.

Reader job-to-be-done: choose a tool that improves delivery speed without adding unbounded operational complexity.

Primary failure risk: selecting a tool on feature demos alone and discovering integration friction after rollout.

Topic coverage map for design tools

Inference: Decision-stage content is most useful when it spans architecture, adoption, governance, economics, and execution risk rather than only feature snapshots.

- Design system integration depth

- Handoff quality to engineering

- Collaboration and review loops

- Prototype fidelity and iteration speed

- Asset management and governance

- Migration complexity from current stack

- Pricing and seat-management tradeoffs

- Change management and enablement

Market evidence and visuals (2026-03-02)

Fact (2026-03-02): The visuals below are sourced from first-party benchmark reports to anchor this design tools evaluation in external evidence, not opinion alone.

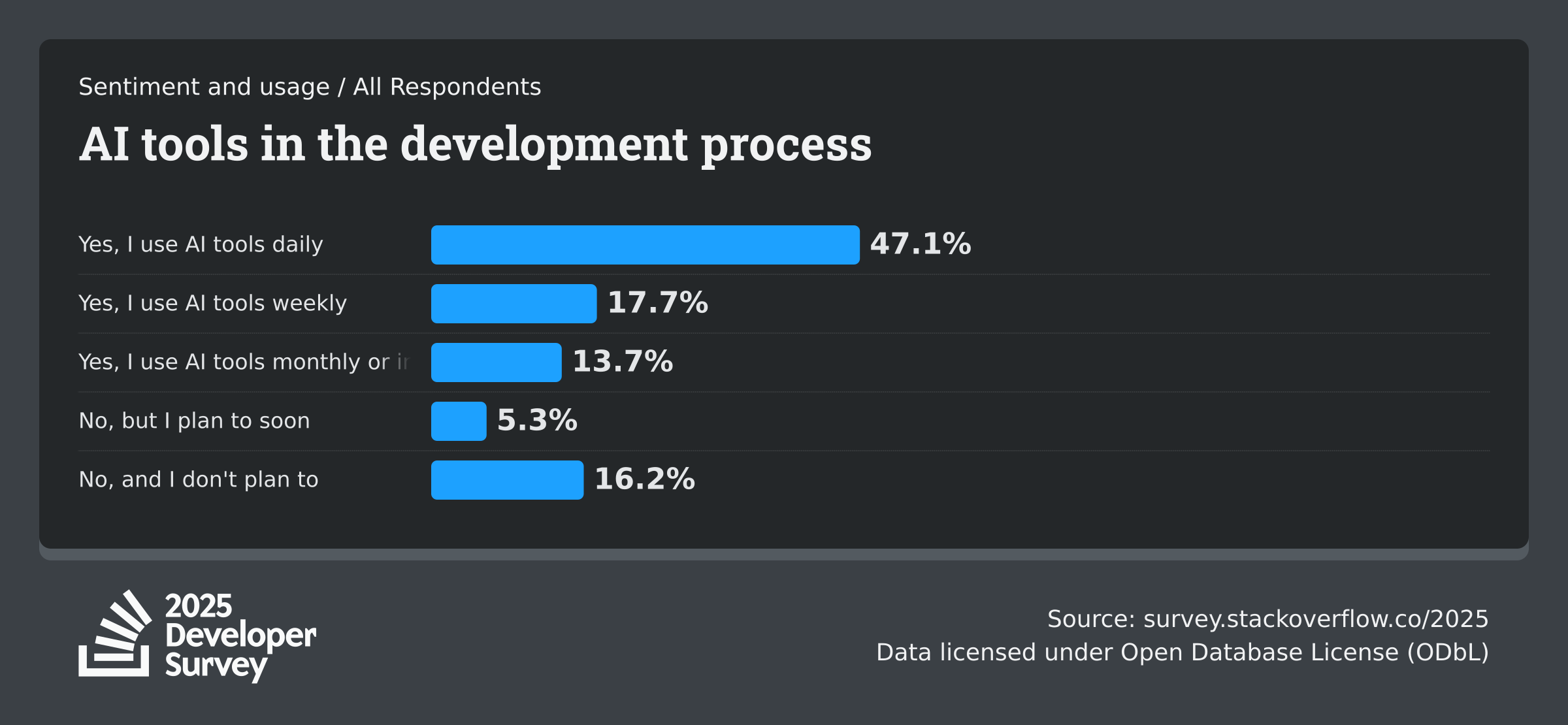

Stack Overflow - Developer Survey 2025 (AI)

Fact (2025-07-29): Annual developer sentiment dataset covering AI adoption, trust, and workflow impact.

GitHub - Octoverse 2025

Fact (2025-11-06): State-of-development report tracking developer growth and AI project adoption.

Google Cloud / DORA - DORA Report 2025

Fact (2025-01-01): Software delivery research on AI usage, platform engineering maturity, and delivery performance.

Evaluation criteria used in this guide

- Implementation effort and migration risk

- Integration depth across existing stack

- Time-to-value for first production workflow

- Governance controls and auditability

- Long-term maintenance overhead and roadmap clarity

- Commercial risk (pricing volatility and lock-in)

- Evidence quality and source freshness for every critical claim

- Operational readiness: ownership, onboarding, and incident response expectations

- Security/compliance mapping completeness before scaled rollout

Design Tools candidates and tradeoff analysis

1. Framer UI Kit and Design System — Kompa

Fact (2026-03-02): Framer UI Kit and Design System — Kompa positions itself as follows: Framer

Inference: Based on current metadata signals, Framer UI Kit and Design System — Kompa is likely to perform best for product designers building interface systems and cross-functional teams collaborating on UI.

Recommendation: Pilot Framer UI Kit and Design System — Kompa in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Shows enough implementation signal to justify a scoped pilot

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Users who only need basic image editing

Source URL: https://www.blocsui.com

2. FramerAuth — Create Member-Only Content in Framer

Fact (2026-03-02): FramerAuth — Create Member-Only Content in Framer positions itself as follows: Upgrade your Framer site with authentication, gated content, and paywalls to build a flourishing business or membership hub.

Inference: Based on current metadata signals, FramerAuth — Create Member-Only Content in Framer is likely to perform best for product designers building interface systems and cross-functional teams collaborating on UI.

Recommendation: Pilot FramerAuth — Create Member-Only Content in Framer in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Fits developer-first execution paths without heavy UI overhead

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Users who only need basic image editing

Source URL: https://framerauth.com

3. Geist Font

Fact (2026-03-02): Geist Font positions itself as follows: Geist is a typeface made for developers and designers, embodying Vercel's design principles of simplicity, minimalism, and speed, drawing inspiration from the renowned Swiss design movement.

Inference: Based on current metadata signals, Geist Font is likely to perform best for product designers building interface systems and cross-functional teams collaborating on UI.

Recommendation: Pilot Geist Font in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Fits developer-first execution paths without heavy UI overhead

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Users who only need basic image editing

Source URL: https://vercel.com/font

4. Markdrop – Visual Feedback Tool for Web Agencies & Designers

Fact (2026-03-02): Markdrop – Visual Feedback Tool for Web Agencies & Designers positions itself as follows: Collect client feedback on websites, images, and videos — all in one place. Built for agencies who want clearer reviews and fewer revision cycles.

Inference: Based on current metadata signals, Markdrop – Visual Feedback Tool for Web Agencies & Designers is likely to perform best for product designers building interface systems and cross-functional teams collaborating on UI.

Recommendation: Pilot Markdrop – Visual Feedback Tool for Web Agencies & Designers in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Fits developer-first execution paths without heavy UI overhead

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Users who only need basic image editing

Source URL: https://markdrop.app

5. Pattern Playground

Fact (2026-03-02): Pattern Playground positions itself as follows: Paletton: Create Harmonious Professional Color Schemes — design resource from Fountn

Inference: Based on current metadata signals, Pattern Playground is likely to perform best for product designers building interface systems and cross-functional teams collaborating on UI.

Recommendation: Pilot Pattern Playground in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Shows enough implementation signal to justify a scoped pilot

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Users who only need basic image editing

Source URL: https://patterns-three-livid.vercel.app

6. Niche Design

Fact (2026-03-02): Niche Design positions itself as follows: Niche Design: A Zine for Authentic Creativity — design resource from Fountn

Inference: Based on current metadata signals, Niche Design is likely to perform best for product designers building interface systems and cross-functional teams collaborating on UI.

Recommendation: Pilot Niche Design in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Shows enough implementation signal to justify a scoped pilot

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Users who only need basic image editing

Source URL: https://www.figma.com/community/widget/1582789587380074979/component-to-do-list

7. Niche design

Fact (2026-03-02): Niche design positions itself as follows: A zine for designers and product makers seeking meaning beyond metrics, inviting you to rethink our culture through the ways we design.

Inference: Based on current metadata signals, Niche design is likely to perform best for product designers building interface systems and cross-functional teams collaborating on UI.

Recommendation: Pilot Niche design in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Shows enough implementation signal to justify a scoped pilot

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Users who only need basic image editing

Source URL: https://nichedesign.press

8. Paletton - The Color Scheme Designer

Fact (2026-03-02): Paletton - The Color Scheme Designer positions itself as follows: In love with colors, since 2002. A designer tool for creating color combinations that work together well. Formerly known as Color Scheme Designer. Use the color wheel to create great color palettes.

Inference: Based on current metadata signals, Paletton - The Color Scheme Designer is likely to perform best for product designers building interface systems and cross-functional teams collaborating on UI.

Recommendation: Pilot Paletton - The Color Scheme Designer in one live workflow first, then scale only if adoption metrics and defect rates improve against baseline.

- Strength: Shows enough implementation signal to justify a scoped pilot

- Constraint: Documentation depth is not obvious from first-pass signals

- Integration check: Confirm whether automation hooks exist or if workarounds are needed.

- Governance check: Define access controls, data-retention boundaries, and audit expectations before launch.

- Not ideal for: Users who only need basic image editing

Source URL: https://paletton.com

Integration and deployment reality checks

Inference: Most rollout failures occur at the integration layer (ownership gaps, weak fallback behavior, and missing review controls), not at the prompt layer.

- Recommendation: Define task-level prompt contracts for production-impacting workflows before enabling broad usage.

- Recommendation: Require human approval gates for changes that can affect production reliability, security, or billing.

- Recommendation: Log model/provider metadata for accepted outputs so review decisions are auditable.

- Recommendation: Maintain one fallback path and test failover behavior before full-team rollout.
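The approval-gate and logging recommendations can be combined into one acceptance check. The sketch below is a hedged illustration, not a reference implementation: `ChangeRecord`, its fields, and `accept_change` are hypothetical names, the `tool` field stands in for whatever tool or provider metadata your stack records, and the in-memory list stands in for a real audit store.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ChangeRecord:
    """A tool-produced change submitted for review (hypothetical schema)."""
    change_id: str
    tool: str                           # tool/provider metadata kept for auditability
    production_impacting: bool          # could affect reliability, security, or billing
    approved_by: Optional[str] = None   # human approver, if any
    accepted_at: Optional[str] = None   # set only when the change is accepted


def accept_change(record: ChangeRecord, audit_log: list[str]) -> bool:
    """Apply the human-approval gate and append one JSON audit entry per decision."""
    if record.production_impacting and record.approved_by is None:
        decision, accepted = "blocked: human approval required", False
    else:
        record.accepted_at = datetime.now(timezone.utc).isoformat()
        decision, accepted = "accepted", True
    audit_log.append(json.dumps({**asdict(record), "decision": decision}))
    return accepted


if __name__ == "__main__":
    log: list[str] = []
    change = ChangeRecord("chg-001", tool="Markdrop", production_impacting=True)
    print(accept_change(change, log))  # False: no approver yet
    change.approved_by = "design-lead"
    print(accept_change(change, log))  # True
    print(len(log))                    # 2
```

Logging blocked attempts as well as accepted ones keeps the review trail complete, so an auditor can see both what shipped and what the gate stopped.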

Role-based recommendation paths

Engineering leaders

Fact (2026-03-02): Engineering leaders typically optimize for reliability, maintainability, and time-to-value under delivery pressure.

Recommendation: For design tools, run scoped pilots with explicit rollback criteria and weekly instrumentation reviews before org-wide rollout.

Product and ops owners

Inference: Product and operations owners benefit most when tools reduce coordination overhead and shorten feedback loops between teams.

Recommendation: Require a clear owner, onboarding plan, and adoption rubric before approving expanded spend.

Security and governance stakeholders

Inference: Security teams generally need evidence of policy controls, access boundaries, and data handling paths before sign-off.

Recommendation: Complete a policy mapping checklist and document unresolved gaps prior to production rollout.

Execution plan and operating checklist

Days 1-30: baseline and pilot design

- Define baseline metrics (cycle time, defect escape rate, adoption rate, and support load).

- Run one bounded production pilot with clear success and rollback thresholds.

- Capture integration blockers, manual workarounds, and security questions in one backlog.

Days 31-60: controlled expansion

- Expand to a second workflow only after first-pilot KPIs show measurable improvement.

- Harden onboarding docs, usage guardrails, and incident playbooks from pilot learnings.

- Review commercial terms against projected usage to avoid surprise spend growth.
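One way to make the Days 31-60 rule concrete is to compare pilot metrics against the Days 1-30 baseline with thresholds agreed before the pilot starts. This is a sketch under assumptions: the 10% improvement and 20% regression thresholds, the `expansion_decision` function, and the rule that every metric must be no worse than baseline are illustrative choices, not values from this guide's sources.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotMetrics:
    cycle_time_days: float           # lower is better
    defect_escape_rate: float        # share of defects found after release; lower is better
    adoption_rate: float             # share of intended users active; higher is better
    support_tickets_per_week: float  # lower is better


def relative_gain(before: float, after: float, higher_is_better: bool) -> float:
    """Relative change versus baseline, signed so that positive always means 'better'."""
    if before <= 0:
        raise ValueError("baseline values must be positive")
    delta = (after - before) if higher_is_better else (before - after)
    return delta / before


def expansion_decision(baseline: PilotMetrics, pilot: PilotMetrics,
                       min_improvement: float = 0.10,
                       rollback_regression: float = 0.20) -> str:
    """Return 'rollback', 'expand', or 'hold' using hypothetical thresholds."""
    gains = [
        relative_gain(baseline.cycle_time_days, pilot.cycle_time_days, higher_is_better=False),
        relative_gain(baseline.defect_escape_rate, pilot.defect_escape_rate, higher_is_better=False),
        relative_gain(baseline.adoption_rate, pilot.adoption_rate, higher_is_better=True),
        relative_gain(baseline.support_tickets_per_week, pilot.support_tickets_per_week,
                      higher_is_better=False),
    ]
    # Any large regression trips the rollback threshold.
    if any(g <= -rollback_regression for g in gains):
        return "rollback"
    # Expand only if nothing got worse and at least one KPI improved measurably.
    if all(g >= 0 for g in gains) and max(gains) >= min_improvement:
        return "expand"
    return "hold"
```

Here "hold" means extending the current pilot rather than expanding or rolling back; fixing the thresholds up front keeps the expansion decision from being argued after the fact.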

Days 61-90: governance and scale readiness

- Formalize ownership model, review cadence, and escalation paths for critical failures.

- Document migration path and fallback plan if pricing, roadmap, or reliability changes materially.

- Publish adoption scorecard and decision log for leadership visibility.

Cost model: optimize accepted outcomes, not raw prompt spend

Fact (2026-02-23): Low per-call pricing can still create higher total cost if acceptance rates are weak and review/rework overhead grows.

- Cost per accepted implementation change

- Cost per resolved debugging incident

- Prompt-to-merge cycle time

- Human rework time per accepted output

- Acceptance ratio by workflow domain
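The cost metrics above can be computed from a few pilot counters. This minimal sketch assumes you track tool spend, outputs produced and accepted, and rework hours per workflow; the `WorkflowCosts` fields, the hourly rate, and the example numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowCosts:
    tool_spend: float      # license and usage spend for the period
    outputs_produced: int  # outputs the tool produced in that workflow
    outputs_accepted: int  # outputs that were kept rather than discarded
    rework_hours: float    # human time spent fixing outputs before acceptance
    hourly_rate: float     # loaded cost of one hour of that human time


def acceptance_ratio(c: WorkflowCosts) -> float:
    """Share of produced outputs that were accepted."""
    return c.outputs_accepted / c.outputs_produced if c.outputs_produced else 0.0


def cost_per_accepted_output(c: WorkflowCosts) -> float:
    """Total cost (spend plus rework labor) divided by accepted outputs."""
    if c.outputs_accepted == 0:
        return float("inf")  # money spent with nothing accepted
    total = c.tool_spend + c.rework_hours * c.hourly_rate
    return total / c.outputs_accepted


if __name__ == "__main__":
    cheaper = WorkflowCosts(tool_spend=200, outputs_produced=100, outputs_accepted=20,
                            rework_hours=30, hourly_rate=80)
    pricier = WorkflowCosts(tool_spend=600, outputs_produced=100, outputs_accepted=60,
                            rework_hours=10, hourly_rate=80)
    print(f"{cost_per_accepted_output(cheaper):.2f}")  # 130.00
    print(f"{cost_per_accepted_output(pricier):.2f}")  # 23.33
```

In the example, the tool with one third of the spend ends up several times more expensive per accepted output, because most of its outputs are discarded and the rest need heavy rework.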

Source quality and citation policy

Fact (2026-03-02): This guide prioritizes first-party product documentation, official benchmark reports, and attributed visuals from high-authority domains.

- Every embedded visual includes alt text, source label, and source URL attribution.

- Time-sensitive statements use absolute dates and should be re-verified before publication.

- Unattributed social claims and low-authority aggregators are excluded from decision-critical sections.

Common mistakes to avoid

- Selecting one tool globally before workflow-level validation.

- Approving rollout without baseline metrics and explicit success/failure thresholds.

- Ignoring fallback strategy and continuity planning for provider shifts.

- Comparing token pricing only, without tracking acceptance quality and rework overhead.

- Running pilots without assigning clear owner accountability and governance controls.

Where recommendations can fail

- Failure mode: no baseline metrics before pilot, making improvement claims unverifiable.

- Failure mode: rollout to entire org before validating integration reliability in one workflow.

- Failure mode: procurement decision made without ownership for maintenance and onboarding.

- Failure mode: ignoring migration plan if pricing or roadmap changes materially.

Implementation sequence (30/60/90 days)

Recommendation: Days 1-30 should define baseline metrics and run one scoped pilot with weekly review checkpoints.

Recommendation: Days 31-60 should expand to a second workflow only if pilot metrics improve and rollback path remains viable.

Recommendation: Days 61-90 should formalize governance, training, and cost controls before wider rollout.

Final recommendation

Inference: Teams that treat tool selection as an operational decision, not a novelty decision, usually see better long-term outcomes.

Recommendation: Use this shortlist as a dated starting point, weigh its tradeoff notes against your own workflows, and re-validate the candidates before each major tooling decision.

Methodology and source freshness

Fact (2026-03-02): Sources in this guide are first-party links captured during the current research cycle.

Fact (2026-03-02): Time-sensitive claims should be re-verified on 2026-03-02 before publication, including benchmark visuals and cited metrics.

FAQ

Is there one universal winner in design tools?

No. Recommendation: assign primary tools by workflow domain, then keep one fallback option for continuity.

Should we standardize on one option for every team?

Inference: Standardizing too early can reduce adaptability. Most organizations perform better with a controlled primary-plus-fallback model.

How often should this comparison be refreshed?

Recommendation: Re-validate quarterly, and also after major product updates, pricing changes, or policy shifts.

What should we measure during pilot evaluation?

Recommendation: measure accepted output quality, rework time, cycle-time impact, and governance fit by workflow.

Sources & review

Reviewed on 3/2/2026

- Framer UI Kit and Design System — Kompa official site

- FramerAuth — Create Member-Only Content in Framer official site

- Geist Font official site

- Markdrop – Visual Feedback Tool for Web Agencies & Designers official site

- Pattern Playground official site

- Niche Design official site

- Niche design official site

- Paletton - The Color Scheme Designer official site

- React Aria official site

- Lessons of Design official site

- Stack Overflow: Developer Survey 2025 (AI)

- GitHub: Octoverse 2025